Automating Nightly Backups for Postgres Databases in Kubernetes with PG-BKUP

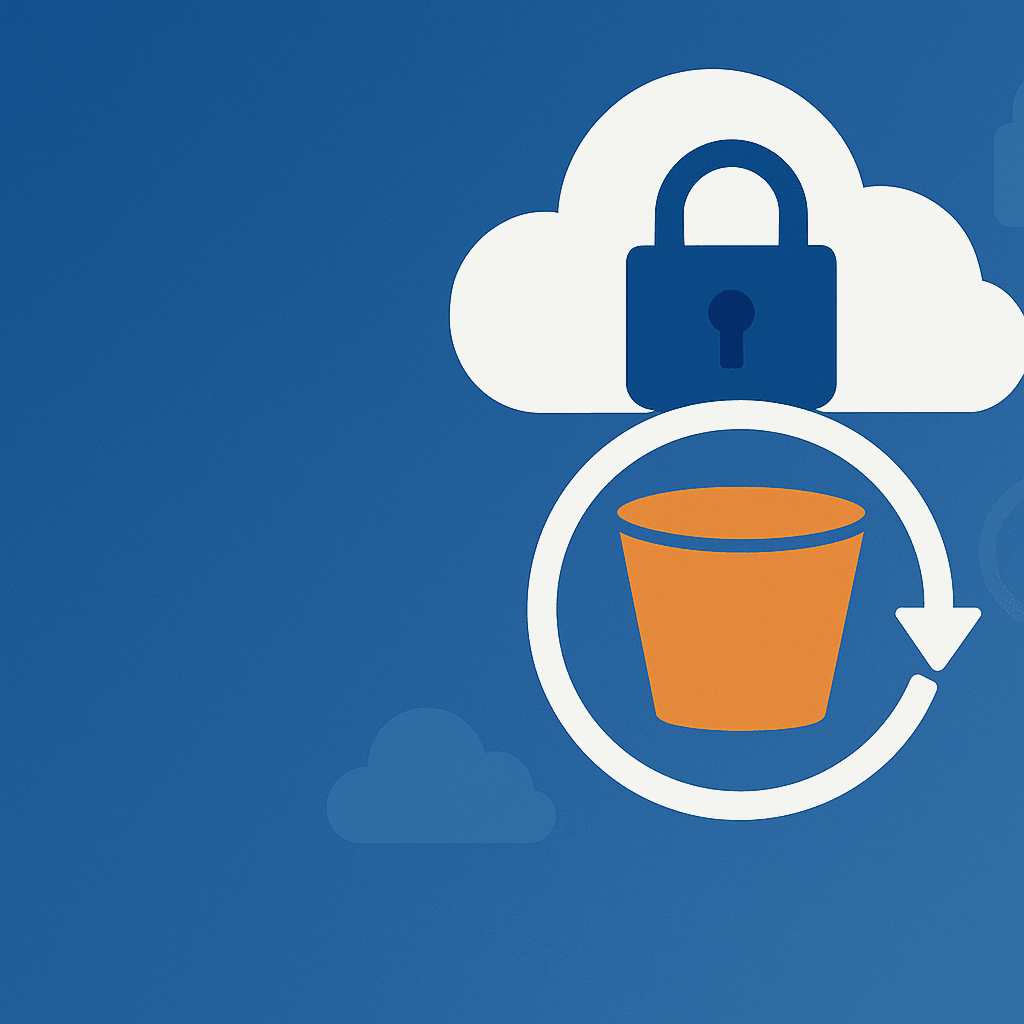

Backing up your data is a critical practice, especially when managing databases in Kubernetes environments. Regular backups of your Postgres database can prevent data loss, ensure business continuity, and provide peace of mind. This guide provides a comprehensive walkthrough on automating nightly backups for a Postgres database running inside a Kubernetes container using the PG-BKUP tool.

PG-BKUP is a container image designed to simplify the backup, restore, and migration of PostgreSQL databases. It supports multiple storage options, including local storage, S3, SFTP, and Azure Blob, and can encrypt backups with GPG. It can back up a single database or several databases from one configuration.

Prerequisites

Before diving into the backup process, ensure the following are in place:

- A running Kubernetes cluster.

- A PostgreSQL database deployed in Kubernetes.

- (Optional) S3-compatible, SFTP, or Azure Blob storage for remote backups.

Backup Strategies with PG-BKUP

PG-BKUP can run on Kubernetes as a Job, as a CronJob, or as a Deployment that uses its built-in scheduled mode. Below, we’ll explore two common approaches: a CronJob for scheduled backups and a Deployment for backing up multiple databases.

1. Backup Using a Kubernetes CronJob

A CronJob is ideal for scheduling regular backups, such as nightly backups. Here’s how to set it up:

Step 1: Create a Kubernetes Secret

Store sensitive values such as database credentials and AWS keys in a Kubernetes Secret. Values under a Secret's data field must be base64-encoded (this is encoding, not encryption), so encode each credential first:

    echo -n "username" | base64
    echo -n "password" | base64
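
Before pasting an encoded value into the Secret, it can help to confirm that it decodes back to the original. The sketch below uses the same placeholder value as the commands above and relies only on the standard `base64` tool; `printf '%s'` is used here as a portable stand-in for `echo -n`.

```shell
# Encode without a trailing newline (printf '%s' behaves like echo -n, portably).
encoded=$(printf '%s' "username" | base64)
echo "$encoded"         # dXNlcm5hbWU=

# Decode to confirm the value round-trips.
decoded=$(printf '%s' "$encoded" | base64 --decode)
echo "$decoded"         # username

# For comparison: plain echo appends a newline, which gets encoded too.
with_newline=$(echo "username" | base64)
echo "$with_newline"    # dXNlcm5hbWUK
```

The last value differs from the first because the trailing newline becomes part of the credential, which is a common cause of authentication failures that are hard to spot.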

Then, create a Kubernetes Secret YAML file:

    apiVersion: v1
    kind: Secret
    metadata:
      name: backup-secret
    type: Opaque
    data:
      username: dXNlcm5hbWU= # Replace with your base64-encoded username
      password: cGFzc3dvcmQ= # Replace with your base64-encoded password
      aws_access_key_id: YWJjZGVmZ2hpamtsbW5vcHFyc3R1dnd4 # Replace with your base64-encoded AWS access key
      aws_secret_access_key: YWJjZGVmZ2hpamtsbW5vcHFyc3R1dnd4 # Replace with your base64-encoded AWS secret key

Step 2: Define the CronJob

Create a CronJob YAML file to schedule nightly backups:

    apiVersion: batch/v1
    kind: CronJob
    metadata:
      name: backup-job
    spec:
      schedule: "0 0 * * *" # Runs at midnight every day
      jobTemplate:
        spec:
          template:
            spec:
              containers:
                - name: pg-bkup
                  image: jkaninda/pg-bkup
                  command:
                    - /bin/sh
                    - -c
                    - backup --storage s3 --path /backups
                  env:
                    - name: DB_PORT
                      value: "5432"
                    - name: DB_HOST
                      value: "postgres"
                    - name: DB_NAME
                      value: "mydb"
                    - name: DB_USERNAME
                      valueFrom:
                        secretKeyRef:
                          name: backup-secret
                          key: username
                    - name: DB_PASSWORD
                      valueFrom:
                        secretKeyRef:
                          name: backup-secret
                          key: password
                    - name: AWS_S3_ENDPOINT
                      value: "https://s3.amazonaws.com"
                    - name: AWS_S3_BUCKET_NAME
                      value: "mybucket"
                    - name: AWS_REGION
                      value: "us-east-1"
                    - name: AWS_DISABLE_SSL
                      value: "false"
                    - name: AWS_FORCE_PATH_STYLE
                      value: "false"
                    - name: AWS_ACCESS_KEY_ID
                      valueFrom:
                        secretKeyRef:
                          name: backup-secret
                          key: aws_access_key_id
                    - name: AWS_SECRET_ACCESS_KEY
                      valueFrom:
                        secretKeyRef:
                          name: backup-secret
                          key: aws_secret_access_key
              restartPolicy: Never
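
Rather than waiting until midnight to find out whether the CronJob works, you can run its job template once by hand. The helper below is a minimal sketch: the function name, the default Job name, and the manifest file names in the usage comment are assumptions for illustration, and it presumes `kubectl` already points at the right cluster and namespace.

```shell
# Hypothetical helper: run the CronJob's job template once, right now.
# Assumes kubectl is configured for the target cluster and namespace.
trigger_backup_now() {
  job_name="${1:-backup-manual-test}"   # hypothetical one-off Job name
  kubectl create job "$job_name" --from=cronjob/backup-job &&
    kubectl wait --for=condition=complete --timeout=300s "job/$job_name" &&
    kubectl logs "job/$job_name"
}

# Usage, after applying the Secret and CronJob manifests
# (the file names are assumptions):
#   kubectl apply -f secret.yaml -f cronjob.yaml
#   trigger_backup_now
```

If the Job does not complete within the timeout, `kubectl describe job backup-manual-test` and the pod logs are usually the quickest way to see whether the problem is credentials, connectivity to the database, or access to the bucket.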

Optional: Enable Backup Encryption

PG-BKUP supports GPG encryption for added security. To encrypt your backups, include the GPG_PASSPHRASE or GPG_PUBLIC_KEY environment variable in the CronJob configuration.
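
If you choose the passphrase option, one way to prepare it is to generate a random value and encode it for the Secret from Step 1. This is a sketch under stated assumptions: the key name `gpg_passphrase` is hypothetical, and any sufficiently long random passphrase would serve equally well.

```shell
# Generate 32 random bytes and render them as a printable passphrase.
passphrase=$(head -c 32 /dev/urandom | base64 | tr -d '\n')

# Base64-encode the passphrase again, as Secret data values require.
encoded=$(printf '%s' "$passphrase" | base64 | tr -d '\n')

# Print a line to paste under "data:" in the Secret.
# "gpg_passphrase" is a hypothetical key name chosen for this example.
echo "gpg_passphrase: $encoded"
```

You would then add a GPG_PASSPHRASE entry to the CronJob's env list with a secretKeyRef pointing at that key, in the same way DB_PASSWORD is wired up. Keep a copy of the passphrase somewhere safe outside the cluster, since encrypted backups cannot be restored without it.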

2. Backup Multiple Databases Using a Deployment

If you’re managing multiple databases, you can use a single Kubernetes Deployment to back them up. Here’s how:

Step 1: Create a ConfigMap

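Define the connection settings for each database in a ConfigMap. Note that this example keeps the database passwords in the ConfigMap as plain text, so anyone who can read the ConfigMap can read them: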

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: backup-config
    data:
      config.yaml: |
        cronExpression: "@daily" # Optional: Schedule backups daily
        databases:
          - host: postgres1
            port: 5432
            name: database1
            user: database1
            password: password
            path: /s3-path/database1
          - host: postgres2
            port: 5432
            name: lldap
            user: lldap
            password: password
            path: /s3-path/lldap
          - host: postgres3
            port: 5432
            name: keycloak
            user: keycloak
            password: password
            path: /s3-path/keycloak
          - host: postgres4
            port: 5432
            name: joplin
            user: joplin
            password: password
            path: /s3-path/joplin

Step 2: Define the Deployment

Create a Deployment YAML file to manage the backups:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: backups
    spec:
      selector:
        matchLabels:
          app: backups
      template:
        metadata:
          labels:
            app: backups
        spec:
          containers:
            - name: pg-bkup
              image: jkaninda/pg-bkup
              command: ["backup", "--storage", "s3", "--path", "/backups", "--config", "/config/config.yaml"]
              resources:
                limits:
                  memory: "128Mi"
                  cpu: "500m"
              env:
                - name: AWS_S3_ENDPOINT
                  value: "https://s3.amazonaws.com"
                - name: AWS_S3_BUCKET_NAME
                  value: "mybucket"
                - name: AWS_REGION
                  value: "us-east-1"
                - name: AWS_DISABLE_SSL
                  value: "false"
                - name: AWS_FORCE_PATH_STYLE
                  value: "false"
                - name: AWS_ACCESS_KEY_ID
                  valueFrom:
                    secretKeyRef:
                      name: backup-secret
                      key: aws_access_key_id
                - name: AWS_SECRET_ACCESS_KEY
                  valueFrom:
                    secretKeyRef:
                      name: backup-secret
                      key: aws_secret_access_key
              volumeMounts:
                - name: config
                  mountPath: "/config"
                  readOnly: true
          volumes:
            - name: config
              configMap:
                name: backup-config
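
Once the ConfigMap and Deployment are applied, a quick check that the pod started and is reading the configuration can save a night of missed backups. The helper below is a small sketch; the function name and the manifest file names in the usage comment are assumptions, and it presumes `kubectl` is configured for the target namespace.

```shell
# Hypothetical helper: wait for the backup Deployment to roll out,
# then show its most recent log lines.
check_backups() {
  kubectl rollout status deployment/backups --timeout=120s &&
    kubectl logs deployment/backups --tail=50
}

# Usage (the file names are assumptions):
#   kubectl apply -f configmap.yaml -f deployment.yaml
#   check_backups
```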

Optional: Enable Notifications

If you enable backupRescueMode (which allows backups to proceed even if one database is down), consider setting up notifications to alert you of any backup failures.

Conclusion

Automating Postgres backups in Kubernetes with PG-BKUP keeps your data recoverable without manual effort. The tool supports local, S3, SFTP, and Azure Blob storage as well as GPG encryption, and the same image covers both a single-database CronJob and a multi-database Deployment. With these strategies in place, you can protect critical data and shorten recovery after unexpected failures.

For more detailed instructions and advanced configurations, explore the official documentation: PG-BKUP Documentation.